The Aggregate transformation in Azure Data Factory defines aggregations of columns in your data streams.

Using the Expression Builder, you can define different types of aggregations such as SUM, MIN, MAX, and COUNT grouped by existing or computed columns.

You can select an existing column or create a new computed column to use as a group by clause for your aggregation.

As you can see, here we have one blob file named RegionSales.txt.

Let’s display the Region by Total Sales, means group the file data based on Region column and aggregate the Sales values as shown below.

Aggregate transformation

Log into Azure Data Factory Portal, click on Author tab and create a New pipeline.

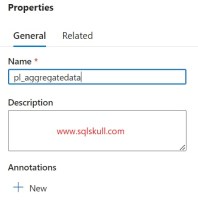

Now provide the Name and Description for pipeline. Description is an optional.

Now, ceate a Data Flow, click on create New data flow.

Now provide the name for Data flow.

Next, add source. Select Add source to start configuring your source transformation.

Now under Source settings tab, define dataset. You can select an existing dataset or create a new one if have not created one.

Let’s create a new dataset, click on New button.

After that, a New dataset window opens. Select Azure Blob Storage as our source file is stored in blob storage then click on Continue button.

Next, it asks you to select file format, DelimitedText, after that click on Continue button.

Once you click on Continue button. It asks you to define New linked service, provide the Name, Subscription, Storage account name as shown below then click on Create button.

After, select the source file location as shown below.

Now, you can see the dataset name, Linked service details and file path. You can verify them, if all corrects click on Ok button else you can modify them.

So far, we have defined the source data. Now we perform the aggregation on source data for that we need to add Aggregate transformation which will help us to aggregate the data.

Click on + icon then select Aggregate transformation.

Now, we need to define Aggregate settings.

In Output stream name, provide the name and description for your aggregate transformation.

In Incoming stream, this will be our source dataset that is Source1.

In Group by, select the column based on that we want to group data. As we want to see Region by total sales. So, Group by column will be Region.

Select the Region in dropdown.

After that, click on Aggregates. Here, we define the aggregated column name and expression to get the aggregated values.

In column dropdown, just write the aggregated column name. For expression just select Expression box and click on Open expression builder link.

Now, Dataflow expression builder window opens. As we want to aggregate the sales data by region so the expression will be sum of Sales(in USD).

After that click on Save and finish button.

Now we have defined the Aggregate settings.

You can see the Data preview but make sure you have enabled the Data flow debug option first, if not enable it.

Then click on Data preview tab, then click on Refresh button.

And you can see, it aggregates the data and show region by total sales.

Now, you can select the pipeline and drag the dataflow to the pipeline canvas.

Now you can add subsequent activities in pipeline to use this aggregated data.

May be you can save this aggregated data into a destination store by using the sink transformation as shown below.

Also Read..

Create Azure Data Factory using Azure Portal

Azure Data Factory ETL – Load Blob Storage to Azure SQL Table

Pivot Transformation Using Data Flow Activity

Wait Activity in Azure Data Factory

Copy multiple files from one folder to another using ForEach loop activity

Delete files from folder using Delete Activity

Create a Filter Activity in Azure Data Factory

Copy sample data using copy data activity in Microsoft Fabric

![]()