Using SQL Server BULK INSERT(BCP) statement you can perform large imports of data from text, or Csv files to SQL Server table, or Views. Basically, to perform BULK INSERT, you need a Source (.CSV, .txt File) and a Target (SQL table, view).

Lets see, how to use BULK INSERT statement to Load data from CSV Files to SQL Server Table.

Here we have a .CSV format file named as TransactionHistory.csv, which contains 113444 rows and this is our source file.

Source file is placed at path – C:\Users\prade\Desktop\Data\TransactionHistory.csv, this path we will be used later in BULK INSERT statement.

Now we need to create a Target that is SQL table to load this data.

Lets create a table in SQL Server, and give it name as TranHistory.

CREATE TABLE TranHistory (TransactionID INT, ProductID INT, ReferenceOrderID INT, ReferecnceOrderLineID INT, TransactionDate Datetime, TransactionType CHAR(1), Quantity Numeric(9,2), ActualCost Numeric(9,2), ModifiedDate Datetime)

Now we have a table in SQL Server as you can see in below screenshot.

To Load the file’s (Source) data To SQL table (Target), write a following BULK INSERT Statement, and execute it.

BULK INSERT TranHistory FROM 'C:\Users\prade\Desktop\Data\TransactionHistory.csv' WITH (FIRSTROW = 2, FIELDTERMINATOR = ',', ROWTERMINATOR='\n'

Lets understand the syntax that is used in BULK INSERT Statement.

As a first argument to BULK INSERT, is Target name that is SQL table TranHistory. It means we want bulk import data into this table. Instead of table name you can also use view.

Next, provided a file path as second argument that is Source to BULK INSERT. It means this is the full path of the data file that contains data to import into the specified table or view.

After that specified a value 2 for option FIRSTROW that is FIRSTROW =2, that means we want to skip first row from source file. As first row contains column header that we do not want to load in SQL table.

Next specified the file format, that depends upon the type of the file, you may want to specify the row delimiter and column delimiter.

As we have a comma separated file so provided a FIELDTERMINATOR as ‘,’ and ROWTERMINATOR as ‘\n’ that is the default row terminator.

You can see, after executing BULK INSERT statement, a message says 112444 rows affected that means BULK INSERT statement executed successfully.

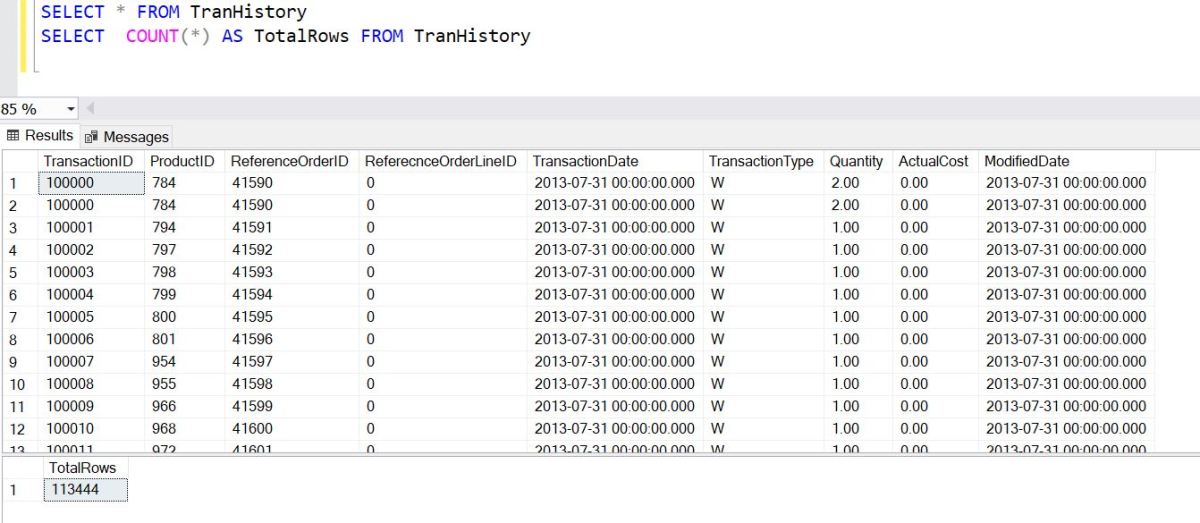

Lets verify, select records from target that is our SQL table – TranHistory, also counts the number of rows that table does have.

You can see, table has same data, and rows count.

SELECT * FROM TranHistory SELECT COUNT(*) AS TotalRows FROM TranHistory

Load Data in Batches Using BULK INSERT

You can also perform Bulk Insert in form of Batches by specifying a BATCHSIZE in BULK INSERT statement for the number of rows in a batch.

Each batch is copied to the server as one transaction so in case of a failure everything does not get rolled back. By default, all data in the specified data file is one batch.

Lets modify the above BULK INSERT statement and specify batch size =40000, means data will be loaded in increment of 40000.

You can see message, data is loaded in batches that is increment of 40000.

Also Read..

![]()